Kubernetes is a popular container-orchestration platform that helps you run complex, distributed applications. AWS EKS makes it easy to deploy Kubernetes on Amazon Web Services, with no up-front investment required from developers or operations staff. In this tutorial, we’ll walk through how to build a cluster with the AWS EKS command-line tools.

This article will teach you how to use the AWS EKS CLI tool (eksctl) to create a Kubernetes cluster with the AWS EKS service.

If you’re a developer, you’ll most likely want to use Kubernetes to deploy containerized apps. But how, specifically? Why not try the AWS EKS CLI?

In this tutorial, you’ll learn how to use the AWS EKS CLI to create a Kubernetes cluster, so you can focus on your code instead of managing infrastructure.

Continue reading to get started on your cluster now!

Prerequisite

This tutorial is a hands-on demonstration. To follow along, you need a computer and an Amazon Web Services account. If you don’t already have an AWS account, a free tier account is available.

Creating Your Admin User

Before you can create a Kubernetes cluster, you need an admin user. Signing in to AWS as that user lets you set up your cluster. Start this tutorial by creating a user with administrator access in the AWS Console.

1. Log in to the AWS Console and go to the IAM dashboard.

Click Users (left panel), then Add Users (top-right), as shown below, to start adding a user.

Creating the First User

2. Next, type K8-Admin in the User name field, select the Access key – Programmatic access option, and click Next: Permissions.

You select the Access key – Programmatic access option because it gives the user programmatic access: an application, such as the AWS CLI, can then authenticate to AWS directly with the access key and tell it which actions to take.

Configuring User Information

3. Select the Attach existing policies directly option, check the AdministratorAccess policy, and click Next: Tags.

The AdministratorAccess policy gives the user (K8-Admin) full access to every AWS service and resource in the account.

Creating policies for AdministratorAccess

4. Click Next: Review to skip adding tags.

Skipping the tags screen

5. Finally, examine the user information and click Create user to complete the admin user creation.

Creating an administrator user

When the admin user is created, you’ll get a Success message like the one below at the top of the screen. Keep track of the Access key ID and Secret access key since you’ll need them to log in later.

Viewing the administrator user keys

Creating an Amazon EC2 Instance

Now that you’ve created the K8-Admin user, you can launch your first EC2 instance. This instance will serve as your master node: the machine from which you’ll run the commands that create the cluster.

1. Go to your EC2 dashboard, click EC2, and then click Launch Instances in the upper-right corner. This takes you to a page where you can choose an Amazon Machine Image (AMI) (step two).

Creating an Amazon EC2 Instance

2. Next, click Select (right-most) beside Amazon Linux 2 AMI (HVM) in the list, as shown below.

Amazon Linux 2 AMI (HVM) ships with Linux kernel 5.10, which is tuned for the latest generation of hardware. This AMI also includes many features that production-level Kubernetes clusters need.

Choosing the Amazon Linux 2 AMI (HVM)

3. Keep the default instance type (t2.micro) and click Next: Configure Instance Details to configure the instance.

Previewing the instance type

4. Enable the Auto-assign Public IP option and click Next: Add Storage. This option gives the instance a public IP address, so your containers can reach the public IP of your Kubernetes master node and your EC2 instances.

Configuring the instance information

5. On the Add Storage page, keep the default (Root) volume and click Next: Add Tags. The Root volume is required to read and write data from within the instance.

Setting up the storage

6. Skip adding tags and click Next: Configure Security Group.

Previewing the tags

7. Keep the security group’s settings, as indicated below, and click Review and Launch.

The Security Group in Detail

8. Review the instance launch details and click Launch to launch the instance. A pop-up window appears where you can select an existing key pair or create a new one (step nine).

Launching an instance

9. In the dialog box that appears, set the key pair as follows:

- Select Create a new key pair in the dropdown box.

- Choose RSA as the key pair type.

- Provide a name for your key pair. This tutorial uses the name my-nvkp.

- Click Download Key Pair, then click Launch Instances.

Creating a new key pair

It may take a minute or two for your instance to fully start. When your instance is up and running, it will appear on your EC2 dashboard, as seen below.

Seeing the newly-created instance in action

AWS CLI Tool Configuration

It’s time to set up the command line (CLI) tools now that your instance is up and running. In order to build your Kubernetes cluster, you’ll need to use the CLI tools in combination with your AWS account.

1. On your EC2 dashboard, check the box to select the instance, as shown below. Click Connect to start connecting to the instance.

Connecting to the EC2 instance

2. Then, to connect to the instance you chose in step one, click the Connect button.

Getting connected to the instance

Once you’ve connected, your browser redirects to the interactive terminal shown below, which serves as a temporary SSH session with your EC2 instance.

The interactive terminal gives you a command line where you can run administrative commands on your new instance.

The interactive terminal in action
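
If you would rather work from a local terminal than the browser-based one, the key pair you downloaded when launching the instance can also open an SSH session. The sketch below is one way to do that; it assumes the key file is in your current directory under the name my-nvkp.pem, and <PUBLIC_IP> is a placeholder for the public address shown on your EC2 dashboard.

```shell
# Placeholder: replace with the public IPv4 address shown on the EC2 dashboard.
INSTANCE_IP="<PUBLIC_IP>"
KEY_FILE="my-nvkp.pem"   # key pair file downloaded when launching the instance

# ssh refuses private keys that other users can read, so restrict the file first.
if [ -f "$KEY_FILE" ]; then
  chmod 400 "$KEY_FILE"
fi

# Print the connection command; ec2-user is the default user on Amazon Linux 2.
echo "ssh -i $KEY_FILE ec2-user@$INSTANCE_IP"
```

Copy the printed command and run it once the placeholder is filled in.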

3. Run the aws command below to check your CLI version.

aws --version

As the output below shows, your Amazon Linux 2 instance runs AWS CLI version 1.18.147, which is out of date. To access all Kubernetes features, you need to download and install AWS CLI version 2+ (step four).

Verifying AWS CLI version
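
If you want to script this check instead of reading the version by eye, a small helper can pull the major version out of the banner. This is a minimal sketch: the function names are made up for illustration, and it assumes the banner starts with aws-cli/ followed by the version number, as it does in the output above.

```shell
# Extract the major version from an `aws --version` banner such as
# "aws-cli/1.18.147 Python/2.7.18 ..." (hypothetical helper name).
aws_cli_major() {
  printf '%s\n' "$1" | sed -n 's|^aws-cli/\([0-9][0-9]*\)\..*|\1|p'
}

# Succeeds (exit 0) when the banner reports a major version below 2.
needs_upgrade() {
  major=$(aws_cli_major "$1")
  [ -n "$major" ] && [ "$major" -lt 2 ]
}

# Usage on the instance (AWS CLI v1 may print its banner to stderr, hence 2>&1):
#   if needs_upgrade "$(aws --version 2>&1)"; then echo "Upgrade to AWS CLI v2"; fi
```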

4. Next, run the curl command below to download the CLI tool v2+ and save it as a zip file named awscliv2.zip.

curl "https://awscli.amazonaws.com/awscli-exe-linux-x86_64.zip" -o "awscliv2.zip"

Obtaining the CLI tool version 2+

5. Run the commands below to unzip the downloaded file and find out where the outdated AWS CLI is installed.

unzip awscliv2.zip
which aws

As the output below shows, the outdated AWS CLI is installed at /usr/bin/aws. You need to replace the version at this path with the updated one.

Updates to the AWS CLI

6. Run the command below to do the following and update the AWS CLI installation on your instance:

- Install the updated AWS CLI tools on your Amazon Linux 2 instance (sudo ./aws/install).

- Set the directory where the CLI tools are installed (--install-dir /usr/bin/aws-cli) and the directory that holds the link to the aws executable (--bin-dir /usr/bin), so the new version takes the place of the old one at /usr/bin/aws.

- Update (--update) the existing AWS CLI installation if one is already present at that location.

sudo ./aws/install --bin-dir /usr/bin --install-dir /usr/bin/aws-cli --update

CLI Tool v2 Installation

7. Run the aws --version command below to check that the updated AWS CLI is installed correctly.

aws --version

As shown below, the installed AWS CLI version is 2.4.7, the latest AWS CLI version at the time of writing.

Checking the latest AWS CLI version

8. Finally, run the aws configure command below to configure your instance with the new AWS CLI tools.

aws configure

Enter the appropriate values at the prompts, as shown below:

- AWS Access Key ID [None] – Enter the Access Key ID you noted in the previous “Creating Your Admin User” section.

- AWS Secret Access Key [None] – Enter the Secret Access Key you noted in the previous “Creating Your Admin User” section.

- Default region name [None] – Enter a supported region, such as us-east-1.

- Default output format [None] – Enter json, since JSON is the preferred format for use with Kubernetes.

Setting Up the AWS Environment
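
The same four values can also be supplied without the interactive prompts, which may be handy in scripts. The sketch below uses the standard AWS CLI environment variables; the two key values are placeholders, not real credentials, and the settings last only for the current shell session.

```shell
# Placeholder values: substitute the keys you recorded for the K8-Admin user.
export AWS_ACCESS_KEY_ID="<YOUR_ACCESS_KEY_ID>"
export AWS_SECRET_ACCESS_KEY="<YOUR_SECRET_ACCESS_KEY>"
export AWS_DEFAULT_REGION="us-east-1"   # same region used throughout this tutorial
export AWS_DEFAULT_OUTPUT="json"

# To confirm which identity the CLI resolves to, run on the instance:
#   aws sts get-caller-identity
```

Environment variables take precedence over the values that aws configure stores, so unset them if you later want the saved profile to apply.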

Amazon EKS Command-Line Tool Configuration (eksctl)

Since your goal is to create a Kubernetes cluster with the AWS EKS CLI, you’ll also need to set up the Amazon EKS command-line tool (eksctl). This tool lets you create and manage Kubernetes clusters on Amazon EKS.

1. Install the latest version of the Kubernetes command-line tool (kubectl) on your EC2 instance. This tool lets you run commands against Kubernetes clusters.
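
The install command for this step is not shown here, so the following is only one possible approach, not necessarily the one originally used. It downloads a kubectl binary from the upstream Kubernetes release site; the v1.21.0 version is an assumption chosen to match the 1.21 cluster created later, and the function names are hypothetical.

```shell
# Assumed version: chosen to match the 1.21 cluster created later in this tutorial.
KUBECTL_VERSION="v1.21.0"

# Build the download URL for the upstream Linux/amd64 kubectl binary.
kubectl_url() {
  printf 'https://dl.k8s.io/release/%s/bin/linux/amd64/kubectl\n' "$1"
}

# Download kubectl, make it executable, and move it onto the PATH.
install_kubectl() {
  curl --silent --location --output /tmp/kubectl "$(kubectl_url "$KUBECTL_VERSION")" &&
    chmod +x /tmp/kubectl &&
    sudo mv /tmp/kubectl /usr/bin/kubectl &&
    kubectl version --client
}

# Run on the EC2 instance:
#   install_kubectl
```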

2. Next, download the latest eksctl release from GitHub as a .tar.gz archive, extract it into the /tmp directory, and move the binary onto your PATH.

Run the commands below to do the following:

- Retrieve the latest eksctl release from GitHub with curl, following redirects (--location), as a .tar.gz archive ("https://github.com/weaveworks/eksctl/releases/latest/download/eksctl_$(uname -s)_amd64.tar.gz").

- Suppress curl’s progress output (--silent) and pipe the download to tar, which extracts the archive content into the /tmp directory (tar xz -C /tmp).

- Move the eksctl binary (/tmp/eksctl) to the /usr/bin directory, where you installed the AWS CLI (sudo mv).

curl --silent --location "https://github.com/weaveworks/eksctl/releases/latest/download/eksctl_$(uname -s)_amd64.tar.gz" | tar xz -C /tmp
sudo mv /tmp/eksctl /usr/bin

3. Finally, run the command below to confirm that eksctl is installed.

eksctl version

The output below confirms that eksctl was installed successfully.

Verifying the version of the eksctl CLI utility
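
Before moving on, it can help to confirm that all three tools are reachable from your shell. The helper below is a small sketch (the function name is made up for illustration) that reports any tool missing from the PATH.

```shell
# Report any required tool that is not on the PATH; succeed only if all are found.
require_tools() {
  missing=0
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "missing: $tool" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Usage on the instance:
#   require_tools aws kubectl eksctl && echo "all tools installed"
```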

If you’re new to eksctl, run the command below to list all supported eksctl commands and their usage.

eksctl --help

Previewing the eksctl help page

Setting up your EKS Cluster

Now that eksctl is configured, you can use eksctl commands to provision your first EKS cluster.

1. Run the eksctl command below to create your first cluster and do the following:

- Create a 3-node Kubernetes cluster named dev (--name dev, --nodes 3) with the t3.micro node type (--node-type t3.micro) in the us-east-1 region.

- Set a minimum of one node (--nodes-min 1) and a maximum of four nodes (--nodes-max 4) for this EKS-managed node group (--managed).

- Name the node group standard-workers (--nodegroup-name standard-workers).

eksctl create cluster --name dev --version 1.21 --region us-east-1 --nodegroup-name standard-workers --node-type t3.micro --nodes 3 --nodes-min 1 --nodes-max 4 --managed

Setting up your EKS Cluster
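
eksctl can also read the same settings from a config file, which is easier to review and keep under version control than a long command line. The sketch below writes a ClusterConfig file that mirrors the flags in the command above; the cluster.yaml file name is an arbitrary choice.

```shell
# Write an eksctl ClusterConfig that mirrors the flags in the command above.
cat > cluster.yaml <<'EOF'
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig
metadata:
  name: dev
  region: us-east-1
  version: "1.21"
managedNodeGroups:
  - name: standard-workers
    instanceType: t3.micro
    desiredCapacity: 3
    minSize: 1
    maxSize: 4
EOF

# Then create the cluster from the file instead of passing flags:
#   eksctl create cluster -f cluster.yaml
```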

2. Navigate to your CloudFormation dashboard to see the actions the command has taken. The eksctl create cluster command uses CloudFormation to provision the infrastructure in your AWS account.

As shown below, an eksctl-dev-cluster CloudFormation stack is being created. This process can take around 15 minutes to complete.

Previewing the eksctl-dev-cluster stack

3. Navigate to your EKS dashboard, where you’ll see a provisioned cluster named dev. Click the dev hyperlink to open the cluster’s dashboard.

Navigating to the dev EKS Cluster dashboard

Below, you can see the dev EKS cluster details, including Node name, Instance type, Node Group, and Status.

Previewing the dev EKS Cluster dashboard

4. Switch to the EC2 dashboard in your AWS account, and you’ll see four instances running: three t3.micro worker nodes and the master node instance you launched earlier.

Previewing the EC2 dashboard

5. Finally, run the command below to update your kubectl config (update-kubeconfig) with your cluster’s endpoint, certificate, and credentials.

aws eks update-kubeconfig --name dev --region us-east-1

Updating the kubectl config

EKS Cluster Application Deployment

You’ve created your EKS cluster and confirmed that it’s running. Right now, though, the cluster is sitting idle. For this demo, you’ll put it to use by deploying an NGINX application.

1. Run the yum command below to install git, automatically accepting all prompts (-y) during installation.

sudo yum install -y git

Installing git

2. Next, run the git clone command below to clone the configuration files from the GitHub repository to your current directory. You’ll use these files to deploy NGINX on your pods and create a load balancer (ELB).

git clone https://github.com/Adam-the-Automator/aws-eks-cli.git

Cloning the configuration files

3. Run the commands below to move into the ata-elk directory and create (kubectl apply) a service for NGINX (./nginx-svc.yaml).

# Change directory to ata-elk
cd ata-elk
# Apply the configuration in ./nginx-svc.yaml to create the NGINX service
kubectl apply -f ./nginx-svc.yaml

Creating an NGINX service

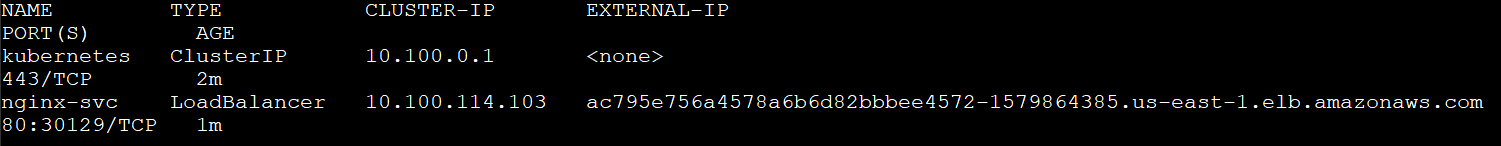

4. Run the kubectl get service command below to check the status of your NGINX service.

kubectl get service

As shown below, Kubernetes created a service (nginx-svc) of type LoadBalancer for your NGINX deployment. Under the EXTERNAL-IP column, you can also see the external DNS hostname of the load balancer that EKS created.

Note down the load balancer’s external DNS hostname; you’ll need it later to test the load balancer.

Checking the status of your NGINX service
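
Rather than copying the hostname from the table by hand, you can ask kubectl to print just that field. The helper below is a sketch with a made-up function name; it assumes the service is called nginx-svc, as in this tutorial, and that the load balancer reports a hostname rather than an IP address.

```shell
# Print the load balancer hostname of a service (defaults to nginx-svc).
lb_hostname() {
  kubectl get service "${1:-nginx-svc}" \
    --output jsonpath='{.status.loadBalancer.ingress[0].hostname}'
}

# Usage once the service exists:
#   LB_HOST=$(lb_hostname) && echo "$LB_HOST"
```

The output is empty until the load balancer has been provisioned.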

5. Run the kubectl command below to deploy the NGINX pods.

kubectl apply -f ./nginx-deployment.yaml

Deploying the NGINX pods

6. Run the kubectl get commands below to check the status of your NGINX deployment and your NGINX pods.

kubectl get deployment
kubectl get pod

As the output below shows, your deployment has three pods, and all of them are running.

Checking the NGINX deployment and pods’ status

7. Run the kubectl get node command below to check the status of your worker nodes.

kubectl get node

Checking the status of your worker nodes

8. Now, run the curl command below to test your load balancer. Replace <LOAD_BALANCER_DNS_HOSTNAME> with the DNS name you noted earlier (step four).

curl "<LOAD_BALANCER_DNS_HOSTNAME>"

As shown below, you see the NGINX welcome page served by the NGINX service on EKS. This output confirms that your load balancer works correctly and that you can reach your NGINX pods.

Checking the load balancer
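
A newly created load balancer can take a few minutes before its DNS name starts answering, so a single curl may fail at first. The sketch below retries until the endpoint responds; the function name, attempt count, and delay are illustrative defaults, not values from this tutorial.

```shell
# Poll a URL until it answers with a successful HTTP status, or give up.
# $1 = URL, $2 = max attempts (default 30), $3 = seconds between attempts (default 10)
wait_for_http() {
  url=$1
  attempts=${2:-30}
  delay=${3:-10}
  i=1
  while [ "$i" -le "$attempts" ]; do
    if curl --silent --fail --output /dev/null --max-time 5 "$url"; then
      echo "ready after $i attempt(s)"
      return 0
    fi
    i=$((i + 1))
    if [ "$i" -le "$attempts" ]; then
      sleep "$delay"
    fi
  done
  echo "no response after $attempts attempt(s)" >&2
  return 1
}

# Usage:
#   wait_for_http "http://<LOAD_BALANCER_DNS_HOSTNAME>"
```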

9. Finally, copy and paste the load balancer’s DNS name into a new browser tab for double-checking.

NGINX will also display a welcome page, indicating that your application is operational.

Performing a load balancer inspection with a browser

Testing the Highly Available Kubernetes Control Plane

Now that you have a running cluster, you’ll test whether the Kubernetes control plane is highly available. Your application’s uptime depends on this capability: if the control plane stops working, your applications go down and can’t serve users.

A highly available Kubernetes control plane increases the availability of your application. You’ll test this capability by stopping your EKS worker nodes and checking whether Kubernetes brings up new nodes to replace the failed ones.

1. Stop all of your EKS worker node instances on your EC2 dashboard, as seen below.

Stopping all instances of EKS worker nodes

2. Run the command below to check the status of the worker nodes.

kubectl get node

You’ll see a mix of statuses, such as Pending, Running, and Terminating. Why? Because when you try to stop all the worker nodes, Kubernetes detects the failure and quickly brings up replacement nodes.

Checking the worker node’s status
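
To track the recovery without rereading the table each time, you can count how many nodes are back in service. The helper below is a sketch with a made-up function name; it relies on kubectl get node listing a healthy node as Ready in its second (STATUS) column.

```shell
# Count nodes whose STATUS column is exactly "Ready".
# Reads `kubectl get node --no-headers` output on stdin (NAME STATUS ROLES AGE VERSION).
count_ready_nodes() {
  awk '$2 == "Ready" { n++ } END { print n + 0 }'
}

# Usage while the node group recovers:
#   kubectl get node --no-headers | count_ready_nodes
```

Rerun it every minute or so; the count should return to the node group’s desired size once the replacements have joined the cluster.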

3. Now run the kubectl get pod command below to test the highly available Kubernetes control plane capability.

kubectl get pod

As the output shows, three new pods (identified by their age) are in the Running state. These new pods indicate that the highly available Kubernetes control plane is working as intended.

Checking on the pods’ status

4. Run the kubectl get service command below to list all available services.

kubectl get service

As shown below, Kubernetes has created a new service, and the load balancer’s DNS name is now different.

A new service created by Kubernetes

5. Finally, copy and paste the DNS name of the load balancer into your browser. You will get the welcome page from NGINX as you did in the last step of the “EKS Cluster Application Deployment” section.

Conclusion

Throughout this tutorial, you’ve learned how to create an EKS cluster, deploy an NGINX service to it, and test the highly available control plane capability.

You should now have a solid grasp of how to set up EKS clusters in your AWS environment.

What’s next on your agenda? Maybe learn how to launch a NodeJS app on AWS using Docker and K8s?
